What counselors actually think about AI (it’s more nuanced than the headlines suggest)

If you’ve spent any time at a counseling conference or in a continuing education session lately, you’ve probably noticed that AI is dominating the conversation. It’s being debated, celebrated, dismissed, and cautiously tested, sometimes all in the same room.

We hear a lot from vendors, researchers who speculate on the future of behavioral health, and journalists. But counselors’ voices seem to be missing from much of that conversation. Yet they’re the people running practices, managing caseloads, writing notes at the end of long days, and holding space for clients through some of the hardest moments of their lives.

To hear from them directly, we surveyed more than 600 licensed counselors about AI: how it’s showing up in their practices today, what concerns them most, and where they expect AI to fit in their work by 2030. The findings are captured in our new research report, Beyond the Hype: What Counselors Really Want from AI.

What we found doesn’t fit neatly into either camp of the debate. Counselors aren’t cheerleaders for AI, but they also aren’t categorically opposed to it. Instead, they are thoughtful and discerning about how it fits into their workflow.

Counselors’ views on AI aren’t black and white

AI is one of the most talked-about forces reshaping mental health right now. And yet, when we asked counselors how they’re actually using it, the picture was a lot more measured than the conversation suggests.

Today, 43% of counselors use at least one AI tool, mostly for the kind of work that happens around sessions rather than in them (documentation, scheduling, etc.). Others are watching carefully, waiting to see how their peers respond before making any moves.

And nearly everyone has a clear sense of where AI is welcome in their practice and where it isn’t. Counselors don’t approach AI with blanket resistance or with uncritical enthusiasm, but with the same careful judgment they bring to clinical decisions.

Where counselors draw the line

One of the sharpest findings in the report is how precisely counselors draw the line between acceptable and unacceptable AI use. Their boundary centers on the therapeutic relationship.

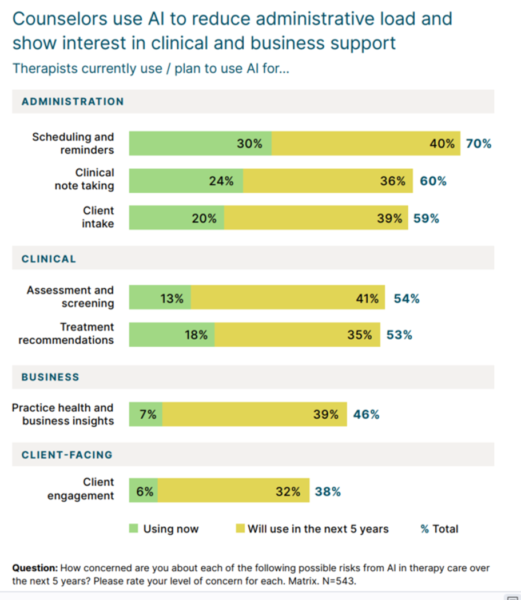

The closer AI gets to the clinical work that happens between a counselor and a client, the less welcome it is. Only 6% of counselors currently use AI for client engagement, and 62% don’t expect to use it that way even five years from now.

That’s not surprising on its face. But the data behind it (where the line falls, how firm it is, and what would need to be true for it to shift) tells a more detailed story than you might expect. Counselors are clear about what it would take: ethical guidelines and transparency. The report gets specific about what that would look like in practice.

Burnout is the context for everything

You can’t understand how counselors think about AI without understanding the conditions they’re working in. Burnout isn’t a side note in this report; it’s the backdrop against which every adoption decision gets made.

Among counselors, 81% report burnout, and half experience emotional exhaustion on a weekly basis. Most (87%) work in private practice, often without additional support. They’re managing hybrid caseloads, juggling multiple payer types, and handling documentation demands that multiply with every modality shift.

“I’m juggling [the demands of] providing therapy to clients, administrative tasks as well as raising a family.”

Jennifer Laubenstein, MSed, LPC, CSAC

When bandwidth is limited, the bar for adopting anything new gets higher. A tool has to genuinely reduce friction to earn a place in the workflow, not just promise to. That’s the lens through which counselors are evaluating AI right now. And it shapes which tools are gaining traction and which aren’t.

Trust is the variable that determines everything else

If there’s one word that runs through the entire report, it’s trust.

Counselors are asking hard questions before they adopt anything: Who has access to client data? What happens to session recordings? Can I override what the tool produces? Will my clients know it’s being used?

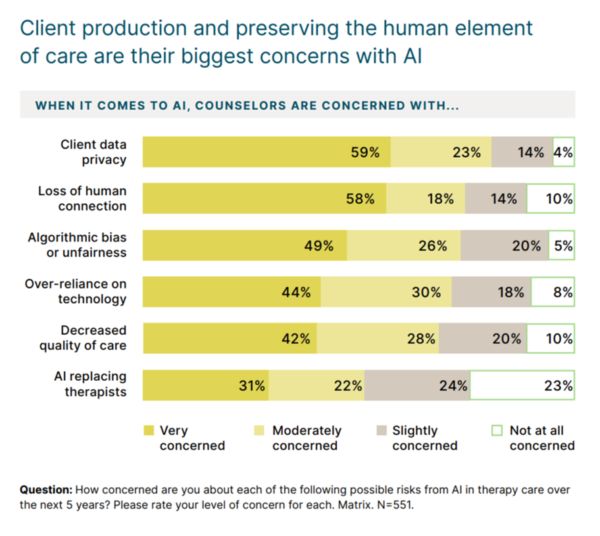

The data reflects that. The top concerns counselors named are client data privacy (82%), loss of human connection (76%), algorithmic bias (75%), and over-reliance on technology (74%).

What’s actually changing by 2030

When we asked counselors where they see AI fitting into their work five years from now, the answers were, in some cases, surprising. Planned use is rising across almost every category we asked about. But not evenly, and not without conditions.

Overall, 59% of counselors expect to be using AI by 2030, up from 43% today. The biggest growth areas are the ones closest to operations: scheduling and reminders are projected to reach 70% adoption, and clinical notetaking 60%.

Some of what counselors expect to change is encouraging. Other things, they say, are unlikely to change regardless of how the technology develops, and those are worth paying attention to if you care about where the profession is heading.

Why this report matters

We wrote Beyond the Hype because the conversation around AI in counseling needed grounding in what practitioners actually experience, not what the industry hopes they’ll eventually accept.

More than 600 counselors told us what they think. If you’re figuring out where you stand on AI in your practice, or where the counseling profession is headed, the data gives you some clear benchmarks.

Beyond the Hype: What Counselors Really Want from AI is part of a larger study. The full Future of Therapy report surveyed more than 1,300 licensed mental and behavioral health professionals across practice settings. This counselor edition zooms in on the specific realities counselors face.

The data, the direct quotes, and the full findings are all in the report. What you’ll take away is a clearer picture of where counselors actually stand, and a more honest foundation for whatever decisions come next.